XGBoost, CatBoost, LightGBM and SnapBoost are all forms of gradient boosting.

# Gradient boosting how-to #

While XGBoost and LightGBM tend to have a marginal performance edge over the classic GBM, they are all based on a similar, very clever idea about how to ensemble decision trees. In this paper, we compare four state-of-the-art gradient boosting algorithms.

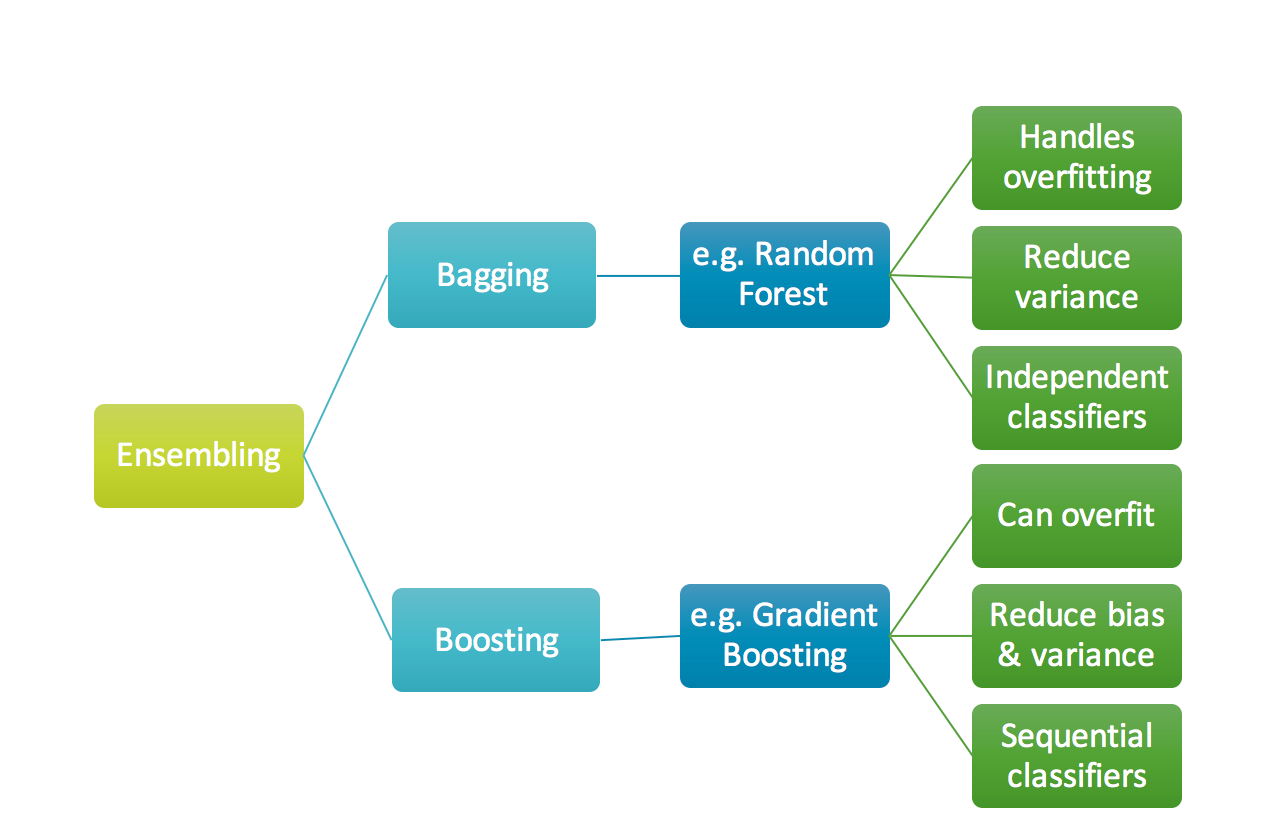

Gradient boosting is a family of machine learning algorithms that combine several weak models into a single strong model with high predictive power. Like its cousin random forest, it is an ensemble technique, and the simple models it combines are usually decision trees. Models of this kind are popular because they classify datasets effectively.

Gradient descent is a first-order iterative optimisation algorithm for finding a local minimum of a differentiable function. Gradient boosting re-defines boosting as a numerical optimisation problem in which the objective is to minimise the model's loss function by adding weak learners using gradient descent. Put differently, it is an iterative functional gradient algorithm, i.e. an algorithm that minimises a loss function by iteratively choosing a function that points in the direction of the negative gradient.

There are several noteworthy variants of gradient boosting out there in the wild, including XGBoost, NGBoost, LightGBM, and of course the classic gradient boosting machine (GBM). These tree ensemble methods perform very well on tabular data prediction problems and are therefore widely used in industrial applications and machine learning competitions. In addition to having a totally kickass name, this family of machine learning algorithms is currently among the best known approaches for prediction problems on structured data.
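To make the "adding weak learners using gradient descent" idea concrete, here is a minimal sketch in plain Python. It rests on several simplifying assumptions that do not come from any of the libraries named above: a regression task, squared-error loss, a single input feature, and one-split decision stumps as the weak learners. The names `fit_stump`, `fit_gbm`, `predict_gbm` and `mse` are illustrative only. For squared-error loss the negative gradient is simply the residual, so each round fits a stump to whatever the current model still gets wrong.

```python
# Toy gradient boosting for regression with squared-error loss.
# Assumption: weak learners are one-split stumps on a single feature.
# Real libraries (XGBoost, LightGBM, ...) grow deeper trees on many features.

def fit_stump(xs, targets):
    """Find the threshold split that minimises squared error on targets."""
    mean = sum(targets) / len(targets)
    best = None
    # The largest value is skipped: it would leave the right side empty.
    for t in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, targets) if x <= t]
        right = [r for x, r in zip(xs, targets) if x > t]
        lv = sum(left) / len(left)
        rv = sum(right) / len(right)
        err = (sum((r - lv) ** 2 for r in left)
               + sum((r - rv) ** 2 for r in right))
        if best is None or err < best[0]:
            best = (err, t, lv, rv)
    if best is None:
        # All x values are identical: fall back to a constant prediction.
        return (xs[0], mean, mean)
    return best[1:]


def predict_stump(stump, x):
    threshold, left_value, right_value = stump
    return left_value if x <= threshold else right_value


def fit_gbm(xs, ys, n_rounds=50, learning_rate=0.1):
    """Return (base, stumps, learning_rate) fitted by functional gradient descent."""
    base = sum(ys) / len(ys)  # initial model: a constant prediction
    preds = [base] * len(ys)
    stumps = []
    for _ in range(n_rounds):
        # Negative gradient of 0.5 * (y - f)^2 with respect to f is (y - f).
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        # Take a small step in the direction the new weak learner points.
        preds = [p + learning_rate * predict_stump(stump, x)
                 for p, x in zip(preds, xs)]
    return base, stumps, learning_rate


def predict_gbm(model, x):
    base, stumps, learning_rate = model
    return base + learning_rate * sum(predict_stump(s, x) for s in stumps)


def mse(model, xs, ys):
    return sum((predict_gbm(model, x) - y) ** 2 for x, y in zip(xs, ys)) / len(ys)


if __name__ == "__main__":
    xs = [float(i) for i in range(10)]
    ys = [x * x for x in xs]  # toy target: y = x^2
    for rounds in (0, 10, 50):
        model = fit_gbm(xs, ys, n_rounds=rounds)
        print(rounds, "rounds, training MSE:", mse(model, xs, ys))
```

The learning rate scales each stump's contribution. With a small value such as 0.1, each round corrects only part of the remaining error, which is why many rounds are needed. The production libraries build on the same loop but add regularisation, smarter split finding and support for other loss functions.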